We build and host investment platforms for our amazing customers. Crucially, our service covers core functions including investor onboarding and KYC as well as the investment process itself: important stuff indeed if you want to get deals done. But before any of that can happen, candidate investors must be able to find your website and the offers you have available. This is where our work on search engine optimisation (SEO) becomes so important. The question invariably arises after we've demonstrated the investment process:

"Well, what do we do about SEO?"

What about SEO indeed. We take it seriously and have built best-practice SEO techniques into our investment platform.

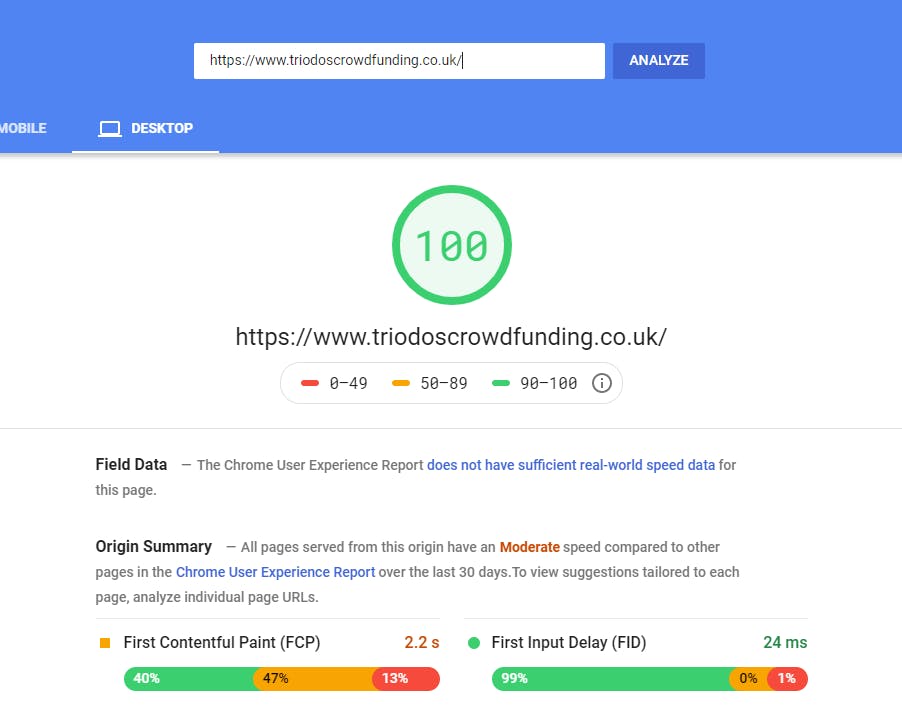

Website speed

One of the most important factors in SEO is page speed. When pages don't load quickly, users don't engage. That much we all know from first-hand experience. But search engines also monitor websites for speed and reward or penalise them accordingly. When we first build your site we benchmark it using Google's web page speed test tool, and sites must score 90% or above before they are released. Speed has become one of the most important metrics to perform well in when being ranked by search engines.

We aim for a speed score of 90% or above before sites are released

We take a number of technical measures to make sites fast and together they add up to a fast end user experience.

Compression: All client-rendered content is gzip compressed for faster transfer speeds.

HTTP protocol: All HTTP requests are served over HTTP/2 (h2), a faster protocol than HTTP/1.1 which browsers only use over encrypted connections.

Server speed: We dedicate CPU and DTU so that the application and database can process requests quickly. If resource thresholds are hit, we scale up.

CDN: We serve static content from our own content delivery network (CDN), which serves requests faster than the web server.

Software design: Our application is designed to serve requests as and when needed, rather than pushing lots of unnecessary information to the client 'just in case'. This means we send fewer bytes over the wire on every page load.
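To get a feel for why gzip compression matters, here is a minimal Python sketch that compresses a made-up block of repetitive page markup and reports the size difference. The sample HTML and the `compression_ratio` helper are assumptions for illustration only; this is not a description of how the platform itself is implemented.

```python
import gzip

# Hypothetical page body: repetitive markup, as real HTML often is.
html = (
    "<div class='offer'><h2>Offer</h2><p>Invest from 100</p></div>\n" * 200
).encode("utf-8")

# Compress the body the way a web server would before sending it.
compressed = gzip.compress(html)


def compression_ratio(raw: bytes, packed: bytes) -> float:
    """Return the compressed size as a fraction of the original size."""
    return len(packed) / len(raw)


ratio = compression_ratio(html, compressed)
print(f"{len(html)} bytes -> {len(compressed)} bytes ({ratio:.1%} of original)")
```

Repetitive markup like this compresses especially well; real pages will vary, but text-based content (HTML, CSS, JavaScript) generally shrinks substantially.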

Security

All sites are available only over HTTPS. We redirect all HTTP requests to HTTPS. This is certainly important for security, but it's also important for SEO as search engines now penalise sites that are not served over HTTPS.
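The redirect rule can be sketched in a few lines of Python. The function name `https_redirect_target` and the example URLs are hypothetical; in practice this logic usually lives in the web server or load balancer configuration rather than in application code, and would normally be sent as a permanent (301) redirect.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit


def https_redirect_target(url: str) -> Optional[str]:
    """Return the https:// URL to redirect to, or None if already on HTTPS."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        return None
    # Keep host, path, query string and fragment; only the scheme changes.
    # Explicit ports (e.g. :80) are not rewritten in this simple sketch.
    return urlunsplit(("https", parts.netloc, parts.path, parts.query, parts.fragment))


print(https_redirect_target("http://example.com/offers?page=2"))
print(https_redirect_target("https://example.com/offers"))
```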

Blog

Unique and useful content is rewarded by search engines. Google and Bing have got really good at assessing content for its 'human readability' and attempt to index only content that has real meaning to visitors. As such, it's important that you can regularly publish quality information for your audience.

All sites now have the option to include a blog. It's a great way to keep your customers up to date with your company news and your take on the industry in general. It's also a great way to increase your 'digital footprint': you will have more indexable content out there on the web that search engines can pick up, and you're increasing your chances of catching 'long tail' search terms which can lead to new user registrations.

Here are a few great examples: https://www.crowdlords.com/news, https://www.shojin.co.uk/blog, https://www.triodoscrowdfunding.co.uk/news, https://www.energiseafrica.com/news

Page meta data

Search engines use meta data to make additional judgements about the content of web pages. Meta data is hidden in the code of web pages. It's a way for you to tell search engines about the content of a page and its intended audience. We include meta data tags in all pages that are accessible before user authentication is required. This data can be updated in the administration part of your site. Whilst it is important to fill out this data, search engines will make a judgement call based on all of the content available to them. Your keywords, title and description should therefore be consistent with the content of your pages.
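For readers who have not seen meta data before, the Python sketch below renders the kind of tags that sit in a page's `<head>`. The `render_meta` function and the sample values are hypothetical and only illustrate the general shape of title, description and keywords tags, not the exact markup our platform produces.

```python
from html import escape
from typing import Sequence


def render_meta(title: str, description: str, keywords: Sequence[str]) -> str:
    """Build <head> tags for a page, escaping values so they are safe in HTML."""
    joined_keywords = ", ".join(keywords)
    lines = [
        f"<title>{escape(title)}</title>",
        f'<meta name="description" content="{escape(description)}">',
        f'<meta name="keywords" content="{escape(joined_keywords)}">',
    ]
    return "\n".join(lines)


print(render_meta(
    "Invest in renewable energy",
    "Browse current investment offers and register as an investor.",
    ["investment", "crowdfunding", "renewable energy"],
))
```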

Sitemap.xml

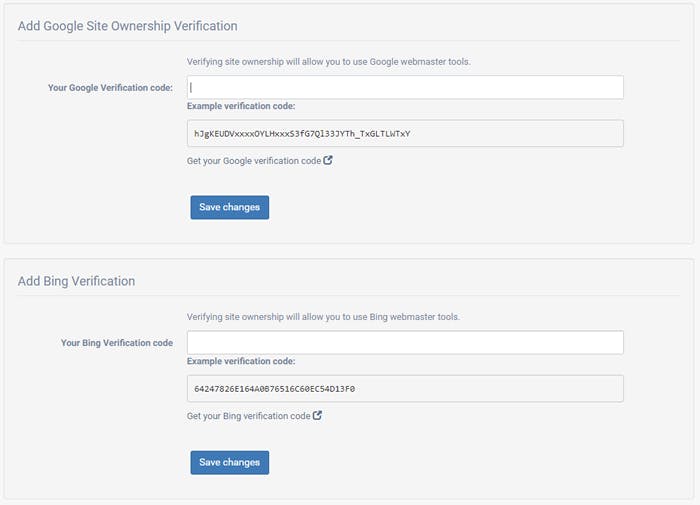

Search engines provide search consoles (Google https://search.google.com/search-console/about, Bing https://www.bing.com/toolbox/webmaster). These are useful tools and can let you monitor how well your website is being indexed. Once you have an account with these sites you can connect your website to them via your administration account by going to domains and settings.

An important part of the toolset is the ability to submit a sitemap to search engines. This is a file that you generate and that contains a list of your web pages. It is your chance to tell search engines about pages they may not have discovered by crawling alone.

Every site we build has a sitemap.xml file at the website root, ready to be submitted to the search consoles as soon as you launch.
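A sitemap is a simple XML file in the format defined by the sitemaps.org protocol. As a minimal sketch of what one contains, the Python below builds a sitemap from a list of page URLs. The `build_sitemap` function and the example.com URLs are hypothetical; your own sitemap.xml is generated for you.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return sitemap XML listing each URL in a <url><loc> entry."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        loc = ET.SubElement(ET.SubElement(urlset, "url"), "loc")
        loc.text = url  # ElementTree escapes characters such as & for us
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


pages = [
    "https://example.com/",
    "https://example.com/offers",
    "https://example.com/blog",
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```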

Index / Follow and Robots.txt

In addition to your sitemap.xml we also create a robots.txt file at the root of your site. This contains the full path of your sitemap.xml and controls which search engines are allowed to index your site (or not). These instructions are advisory only and can't be enforced. But for search engines such as Google and Bing you can tell them about pages you do not wish them to index. You can set this policy at a site-wide level, which is useful to prevent indexing if you are soft launching or making some big changes to your site. You can also toggle noindex / nofollow on a per-page basis as needed.
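To show what a robots.txt file looks like and how a well-behaved crawler reads it, here is a small Python sketch. It writes a file with a sitemap reference and one blocked path, then checks it with the standard library's `urllib.robotparser`. The `build_robots_txt` function, the `/admin/` path and the example.com URLs are all assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(sitemap_url, disallow=()):
    """Return robots.txt text applying the same rules to every crawler."""
    lines = ["User-agent: *"]
    if disallow:
        lines += [f"Disallow: {path}" for path in disallow]
    else:
        lines.append("Disallow:")  # an empty Disallow means nothing is blocked
    lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


robots = build_robots_txt("https://example.com/sitemap.xml", disallow=["/admin/"])
print(robots)

# Read the file back the way a compliant crawler would.
parser = RobotFileParser()
parser.parse(robots.splitlines())
print(parser.can_fetch("*", "https://example.com/admin/login"))
print(parser.can_fetch("*", "https://example.com/offers"))
```

The per-page equivalent is a tag such as `<meta name="robots" content="noindex, nofollow">` in the page's `<head>`.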

On page optimisation

Page HTML structure is also assessed when scoring pages for ranking positions. We follow best practice on this, e.g. a single H1 tag per page. But we also give you tools to directly modify page HTML so that you can fine-tune its structure to meet search engine requirements.
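If you do edit page HTML yourself, a quick check like the Python sketch below can confirm that a page still has exactly one H1. The `count_h1` helper and the sample markup are hypothetical; the sketch uses only the standard library's `html.parser`.

```python
from html.parser import HTMLParser


class H1Counter(HTMLParser):
    """Counts opening <h1> tags while the document is parsed."""

    def __init__(self):
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        # HTMLParser lower-cases tag names, so <H1> is counted too.
        if tag == "h1":
            self.count += 1


def count_h1(html: str) -> int:
    """Return the number of <h1> elements in an HTML string."""
    counter = H1Counter()
    counter.feed(html)
    counter.close()
    return counter.count


page = "<html><body><h1>Our offers</h1><h2>Solar farm</h2></body></html>"
print(count_h1(page))
```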

The same tools let you track user conversion rates by adding gtags or similar event notification systems. Conversion rate optimisation is a topic for another post.

If you'd like to find out more about having your own direct investment platform that enables your business, then please get in touch and speak to one of the ShareIn team.